Quantum computing is an emerging computing paradigm that uses the principles of quantum mechanics to solve certain types of problems much faster than classical computers can, at least in principle. Unlike classical computers, which use bits as the fundamental unit of information (each bit is either 0 or 1), quantum computers use quantum bits, or qubits.

Key principles and concepts of quantum computing include:

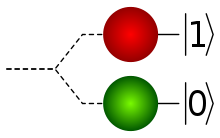

Superposition

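A qubit can exist in a superposition of the states 0 and 1. Its state is a weighted combination α|0⟩ + β|1⟩ of complex amplitudes with |α|² + |β|² = 1. When the qubit is measured, it yields 0 with probability |α|² and 1 with probability |β|², and the superposition is lost. A register of n qubits is described by 2ⁿ amplitudes at once. Quantum algorithms exploit this by arranging for the amplitudes of wrong answers to cancel (interference) while those of right answers reinforce. This is often loosely described as performing many calculations in parallel, but each measurement still returns only a single outcome.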
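
The short Python sketch below illustrates the measurement rule, assuming an idealized, noise-free qubit stored as two complex amplitudes, one for 0 and one for 1. Measuring it yields 0 or 1 with probabilities equal to the squared magnitudes of those amplitudes. The helper names `measurement_probabilities` and `measure` are hypothetical, and sampling with a pseudo-random generator only imitates what a real measurement does.

```python
import math
import random


def measurement_probabilities(alpha: complex, beta: complex) -> tuple:
    """Return (P(0), P(1)) for the state alpha|0> + beta|1> (Born rule)."""
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    # A valid qubit state must be normalized: |alpha|^2 + |beta|^2 = 1.
    if not math.isclose(p0 + p1, 1.0, abs_tol=1e-9):
        raise ValueError("state is not normalized")
    return p0, p1


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Sample one measurement outcome (0 or 1) from the qubit's probabilities."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


# Equal superposition (|0> + |1>) / sqrt(2).
amp = 1 / math.sqrt(2)
print(measurement_probabilities(amp, amp))  # both probabilities are about 0.5

# Each measurement gives a single definite bit; only the statistics reveal the superposition.
rng = random.Random(0)
counts = [0, 0]
for _ in range(10_000):
    counts[measure(amp, amp, rng)] += 1
print(counts)  # roughly [5000, 5000]
```

Every individual run returns a definite 0 or 1. The superposition shows up only in the statistics gathered over many runs.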

Entanglement

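Qubits can become entangled, which means their joint state cannot be described by giving each qubit a state of its own. Measuring entangled qubits produces correlated outcomes even when the qubits are physically separated, although these correlations cannot be used to send information. Together with superposition, entanglement enables quantum computers to perform certain types of operations more efficiently than classical computers.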
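
The following sketch is an idealized state-vector simulation, not code for real hardware. It prepares the standard two-qubit entangled "Bell" state. First, a Hadamard gate puts the first qubit into an equal superposition. Then a CNOT gate flips the second qubit whenever the first is 1. The function names are hypothetical. The check in `is_product_state` relies on a simple fact: two independent qubits (a|0⟩ + b|1⟩) and (c|0⟩ + d|1⟩) have joint amplitudes ac, ad, bc, bd, so a00·a11 must equal a01·a10.

```python
import math
import random

# Basis order for a two-qubit state: |00>, |01>, |10>, |11>
# (the first character is qubit 0, the second is qubit 1).
BASIS = ["00", "01", "10", "11"]


def hadamard_on_qubit0(state):
    """Apply a Hadamard gate to qubit 0 of a 4-amplitude state."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_0_to_1(state):
    """CNOT: flip qubit 1 whenever qubit 0 is 1 (swaps the |10> and |11> amplitudes)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def is_product_state(state, tol=1e-9):
    """A two-qubit pure state is a product of single-qubit states iff a00*a11 == a01*a10."""
    a00, a01, a10, a11 = state
    return abs(a00 * a11 - a01 * a10) < tol


bell = cnot_0_to_1(hadamard_on_qubit0([1, 0, 0, 0]))  # start from |00>
probs = [abs(a) ** 2 for a in bell]
print(dict(zip(BASIS, probs)))   # only '00' and '11' are possible, about 0.5 each
print(is_product_state(bell))    # False: the qubits are entangled

# Each qubit on its own looks random, yet the two outcomes always agree.
rng = random.Random(1)
samples = rng.choices(BASIS, weights=probs, k=1000)
print(all(outcome[0] == outcome[1] for outcome in samples))  # True
```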

Quantum Gates

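Quantum computers use quantum gates to manipulate qubits, much as classical computers use logic gates to manipulate bits. Mathematically, each quantum gate is a reversible (unitary) operation on the qubits' amplitudes. Some gates, such as the Hadamard gate, create superpositions, and two-qubit gates such as CNOT can create entanglement.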
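
The sketch below shows the gate-as-matrix view using plain Python lists rather than any quantum SDK. It applies two single-qubit gates to a qubit's amplitude vector: X, a quantum NOT, and the Hadamard gate, which creates equal superpositions. Applying Hadamard twice undoes it, which illustrates that quantum gates are reversible. Starting from |1⟩ illustrates interference, because the two contributions to the |0⟩ amplitude cancel.

```python
import math

S = 1 / math.sqrt(2)
X = [[0, 1], [1, 0]]    # quantum NOT gate: swaps the |0> and |1> amplitudes
H = [[S, S], [S, -S]]   # Hadamard gate: maps |0> and |1> to equal superpositions


def apply(gate, state):
    """Multiply a 2x2 gate matrix by a qubit's amplitude vector [alpha, beta]."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]


zero, one = [1, 0], [0, 1]
print(apply(X, zero))             # [0, 1]: like a classical NOT, |0> becomes |1>
print(apply(H, zero))             # about [0.707, 0.707]: an equal superposition
print(apply(H, apply(H, zero)))   # about [1.0, 0.0]: H undoes itself (reversibility)

# Interference: starting from |1>, the two paths into |0> cancel after the second H.
print(apply(H, apply(H, one)))    # about [0.0, 1.0]
```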

Quantum Algorithms

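Quantum algorithms are designed to exploit quantum properties so that they solve specific types of problems more efficiently than the best known classical algorithms. One example is Shor’s algorithm for integer factorization. It runs in polynomial time and, on a sufficiently large quantum computer, could break widely used public-key encryption such as RSA. Another is Grover’s algorithm for unstructured search, which finds a marked item among N candidates in about √N steps instead of the roughly N steps a classical search needs.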
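
Here is a small classical state-vector simulation of Grover's algorithm, which shows how it concentrates probability on the answer. The function `grover_search` is hypothetical. It only tracks the amplitudes on a classical machine and therefore gives no speedup itself. Each iteration applies an oracle that flips the sign of the marked item's amplitude, followed by a diffusion step that reflects every amplitude about the mean.

```python
import math


def grover_search(n_items: int, marked: int, iterations: int) -> list:
    """Simulate Grover's algorithm with real amplitudes.

    Returns the probability of measuring each index after the given
    number of iterations (oracle followed by diffusion).
    """
    amp = [1 / math.sqrt(n_items)] * n_items   # uniform superposition over all items
    for _ in range(iterations):
        amp[marked] = -amp[marked]              # oracle: flip the sign of the marked item
        mean = sum(amp) / n_items
        amp = [2 * mean - a for a in amp]       # diffusion: inversion about the mean
    return [a * a for a in amp]


# With N = 4, a single iteration finds the marked item with certainty.
probs = grover_search(4, marked=2, iterations=1)
print(probs)  # [0.0, 0.0, 1.0, 0.0]

# In general about (pi/4) * sqrt(N) iterations are needed.
n = 1024
k = int(math.pi / 4 * math.sqrt(n))   # 25 iterations for N = 1024
big = grover_search(n, marked=7, iterations=k)
print(k, big[7])
```

For N = 1024 the sketch uses 25 iterations, the integer part of (π/4)·√1024 ≈ 25.1. After those iterations, the marked item is measured with probability above 0.99. A classical search through the same list would check about 512 items on average.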

Quantum Speedup

Quantum computers have the potential to provide significant speedups for specific problems, such as cryptography, optimization, and simulating quantum systems. The size of the speedup varies by problem. For factoring it is believed to be exponential, while for unstructured search it is only quadratic. Not all problems will benefit equally from quantum computing, and many everyday tasks will remain better suited to classical computers.
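
Simulating quantum systems is a natural target for quantum speedups, partly because describing n qubits classically takes 2ⁿ complex amplitudes. The arithmetic below assumes a dense state vector stored as double-precision complex numbers, at 16 bytes each. Specialized simulation methods can do better for some circuits, so treat this as a rough upper-end estimate rather than a hard limit. The constant and function names are illustrative.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number stored as two 64-bit floats


def state_vector_bytes(n_qubits: int) -> int:
    """Memory for a dense state vector holding all 2**n_qubits amplitudes."""
    return (2 ** n_qubits) * BYTES_PER_AMPLITUDE


for n in (10, 20, 30, 40):
    gib = state_vector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:g} GiB")
# 30 qubits -> 16 GiB; each extra qubit doubles the requirement.
```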

Building and maintaining quantum computers is extremely challenging. One obstacle is quantum decoherence, the loss of quantum properties over time through unwanted interaction with the environment. Another is the difficulty of quantum error correction, which needs many physical qubits to protect each logical qubit. Quantum computing has the potential to revolutionize fields such as cryptography, drug discovery, and materials science, but many technical hurdles remain before it becomes a mainstream technology.
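
Error correction fights errors by adding redundancy. A minimal classical analogue is the three-bit repetition code, which mirrors the bit-flip part of the three-qubit bit-flip code. One logical bit is spread over three physical bits, and a majority vote fails only if two or more of them flip. The sketch assumes independent flips with probability p and ignores phase errors, which real quantum codes must also correct. Real quantum codes also cannot simply copy a qubit, so they spread its state across entangled qubits instead. The function names are hypothetical.

```python
import random


def logical_error_rate(p: float) -> float:
    """Failure probability of a 3-bit repetition code with majority voting,
    assuming each bit flips independently with probability p."""
    # The vote fails when exactly two bits flip or when all three flip.
    return 3 * p**2 * (1 - p) + p**3


def simulate_repetition_code(p: float, trials: int, rng: random.Random) -> float:
    """Monte Carlo estimate of the same failure probability."""
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))
        if flips >= 2:  # majority vote returns the wrong value
            failures += 1
    return failures / trials


rng = random.Random(0)
for p in (0.01, 0.1, 0.6):
    print(p, logical_error_rate(p), simulate_repetition_code(p, 20_000, rng))
```

The logical error rate 3p² − 2p³ is lower than p only when p < 1/2. This is a toy version of the threshold idea behind error correction: redundancy helps only when the physical error rate is already small enough.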